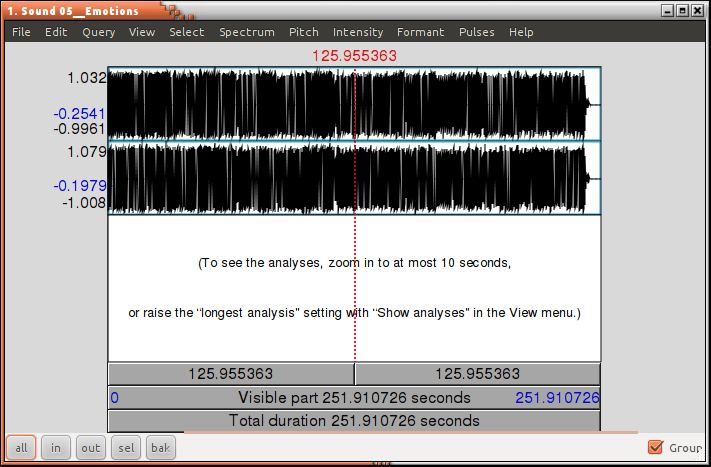

The grayed ovals represent operations implemented in the tool, while the grayed rect-angles represent the basic elements. Procedures to obtain the basic elements that are directly needed for prosodic feature extraction. The requirements needed for the tool and its basic elements and implementation is shown below: Then a set of duration statistics (e.g., the means and variances of pause duration, phone duration, and last rhyme duration), F0 related statistics (e.g., the mean and variance of logarithmic F0 values), and energy related statistics are calculated. USING THE TOOL: Here a step by step procedure is given for extracting the prosodic features from a corpus with audio and time aligned words and phones as input, our tool _rst extracts a set of basic elements (e.g., raw pitch, stylized pitch, VUV) representing duration, F0, and energy information, as is shown in Figure 1 (c). Currently the gender information is provided in a metadata _le, rather than obtaining it via automatic gender detection. Other features: We add the gender type to our feature set. We also include the slope difference and dynamic patterns (i.e., falling, rising, and unvoiced)across a boundary as slope features, since a continuous trajectory is more likely to correlate with non-boundaries whereas, a broken trajectory tends to indicate a boundary of some type.Įnergy features: The energy features are computed based on the intensity contour produced by Praat.Similar to the F0 features, a variety of energy related range features, movement features, and slope features are computed, using various normalization methods. The last slope value of the word preceding a boundary and the _rst slope value of the word following a boundary are computed. Slope features: Pitch slope is generated from the stylized pitch values. The minimum,maximum, mean, the _rst, and the last stylized F0 values are computed and compared to that of the following word or window, using log difference and log ratio. 
Movement features: These features measure the movement of the F0 contour for the voiced regions of the word or window preceding and the word or window following a boundary. Theseįeatures are also normalized by the baseline F0 values, the topline F0 values, and the pitch range using linear difference and log difference. These include the minimum, maximum, mean, and last F0 values of a speci_c region (i.e., within a word or window) relative to each word boundary. Range features: These features re_ect the pitch range of a single word or a window preceding or following a word boundary. Several different types of F0 features are computed based on the stylized pitch contour. Voiced/unvoiced (VUV) regions are identi_ed and the original pitch contour is stylized over each voiced segment. The pitch baseline and topline, as well as the pitch range, are computed based on the mean and variance of the logarithmic F0 values. The duration and the normalized duration of each word are also included as duration features.į0 features: Praat's autocorrelation based pitch tracker is used to obtain raw pitch values. Pause duration and its normalization after each word boundary are extracted.We also measure the duration of the last vowel and the last rhyme, as well as their normalizations, for each word preceding a boundary. In addition to the word spoken, the prosodic content of the speech has been proved quite valuable in a variety of spoken language processing tasks such as sentence segmentation and tagging, dis_uency detection, dialog act segmentation and tagging, and speaker recognition.Here in this project I used an open source prosodic tool for extracting the prosodic analysis.This tool uses praat for its implementation.ĭuration features: Duration features are obtained based on the word and phone alignments of human transcriptions (or ASR output). 
INTRODUCTION: There has been an increasing interest in utilizing a wide variety of knowledge sources in order to perform automatic tagging of speech events, such as sentence boundaries and dialogue acts.
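
To make two of the boundary features described above more concrete, the following minimal sketch computes pause durations from time-aligned words, and the slope difference and dynamic pattern across a boundary. It is an illustration only, not the tool's actual code. The `Word` structure, all function names, the divide-by-mean normalization, the use of `None` for an unvoiced region, and the treatment of a zero slope as rising are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Word:
    """A time-aligned word; times are in seconds (hypothetical structure)."""
    label: str
    start: float
    end: float


def pause_durations(words: List[Word]) -> List[float]:
    """Pause after each word boundary: gap between a word's end and the next word's start."""
    return [max(0.0, nxt.start - cur.end) for cur, nxt in zip(words, words[1:])]


def normalize_by_mean(values: List[float]) -> List[float]:
    """Assumed normalization: divide each value by the mean of all values."""
    mean = sum(values) / len(values) if values else 0.0
    return [v / mean if mean > 0 else 0.0 for v in values]


def slope_label(slope: Optional[float]) -> str:
    """Map a stylized-pitch slope to 'f' (falling), 'r' (rising), or 'u' (unvoiced).

    Assumptions: None marks an unvoiced region; a zero slope is labelled rising.
    """
    if slope is None:
        return "u"
    return "f" if slope < 0 else "r"


def slope_pattern(last_before: Optional[float], first_after: Optional[float]) -> str:
    """Dynamic pattern across a boundary, e.g. 'fr' for falling then rising."""
    return slope_label(last_before) + slope_label(first_after)


def slope_difference(last_before: Optional[float],
                     first_after: Optional[float]) -> Optional[float]:
    """Slope after the boundary minus slope before it; None if either side is unvoiced."""
    if last_before is None or first_after is None:
        return None
    return first_after - last_before


if __name__ == "__main__":
    words = [Word("so", 0.00, 0.25), Word("yeah", 0.25, 0.60), Word("okay", 1.10, 1.45)]
    pauses = pause_durations(words)
    print(pauses, normalize_by_mean(pauses))
    print(slope_pattern(-12.5, 8.0), slope_difference(-12.5, 8.0))
```

In this sketch a pattern such as `fr` (falling before the boundary, rising after it) corresponds to the broken trajectory that the slope features are meant to capture, while `ff` or `rr` with a small slope difference would suggest a continuous trajectory.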